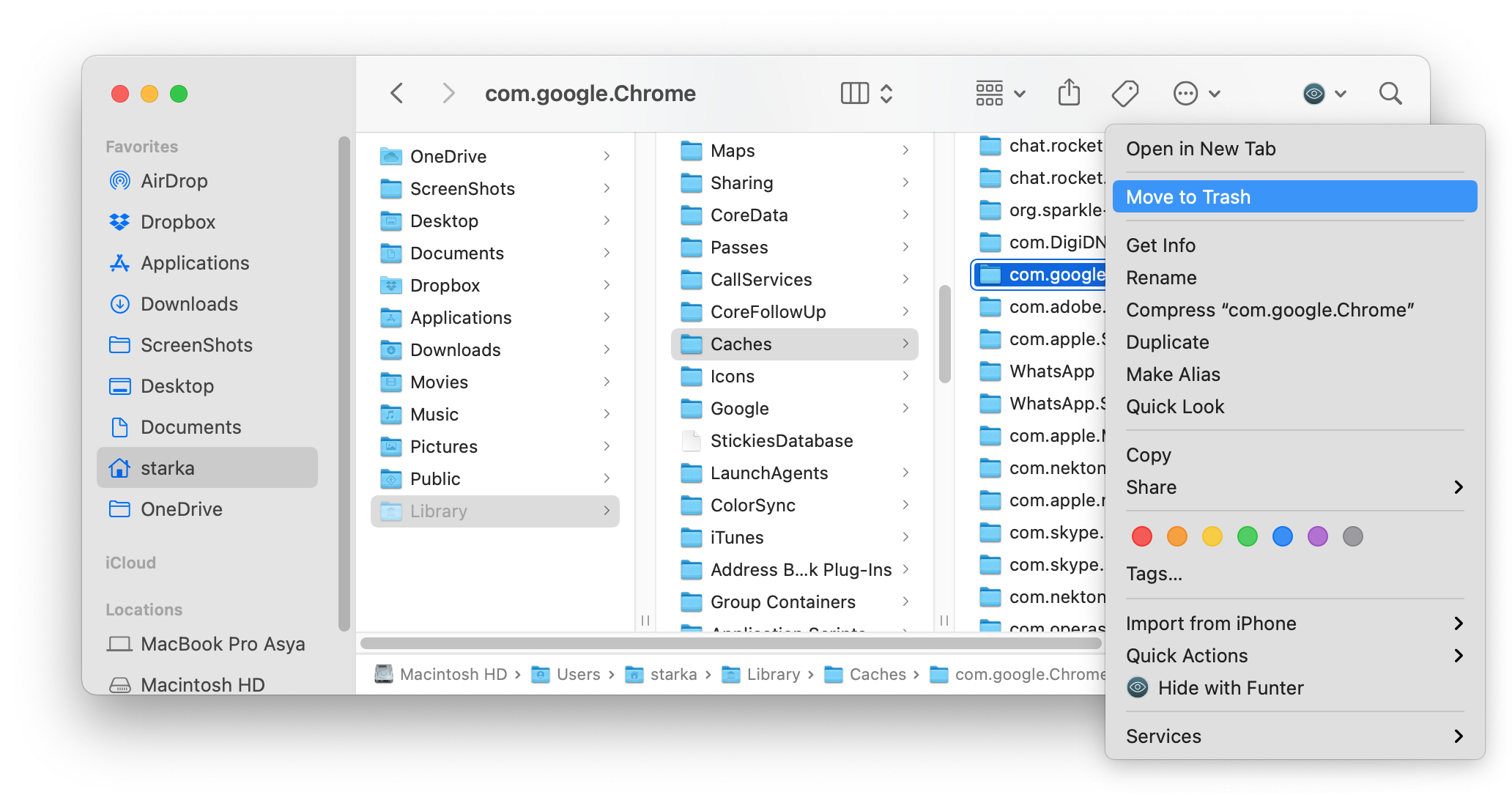

C# .Net: Fastest Way to Read and Process Text Files

This will benchmark many techniques to determine the fastest way to read and process text files in C# .Net.

The tests were run on a Windows 7 64-bit machine with 16GB of memory, using a purely mechanical 7200 rpm drive, as I didn't want the effects that the memory of a "hybrid" drive or mSata card might have on the system to taint the results. Each line is then parsed character by character to determine whether it is a number and, if so, a mathematical calculation is performed based on it.

This trial was run once, waiting 5 minutes after the machine was up and running from a cold start. This was to eliminate any other background processes starting up which might detract from the test. There was no reason to run this test multiple times because, as you'll see, there are clear winners and losers.

Before starting, my hypothesis was that the techniques that read the entire file into an array and then use parallel for loops to process all the lines would win out hands down.

All times are indicated in minutes:seconds.milliseconds format. Lower numbers indicate faster performance. Green cells indicate the winner(s) for that run; yellow cells indicate the runners-up.

- T3: StreamReader, read line by line, process
- T4: BufferedStream, read line by line, process
- T5: BufferedStream with buffer size preset, read line by line, process
- T6: StreamReader, read line by line into StringBuilder, process
- T7: as above with StringBuilder size preset
- T8: StreamReader, read into a string array of preset size, process using Parallel.For
- T9: File.ReadAllLines() into a string array, process using Parallel.For

Seeing the results, there is no clear-cut winner between techniques T1 through T7. T8 and T9, which implemented the parallel processing techniques, completely dominated. Those techniques always finished in less than a third (33%) of the time it took any technique processing line by line.

The surprise for me came where each line was 10 GUIDs in length. There, the .Net built-in File.ReadAllLines() method started performing slower. This wasn't quite so evident when just plain reading a file. However, it indicates that if you really want to micro-optimize your code for speed, always pre-allocate the size of a string array when possible.

On my system, unless someone spots a flaw in my test code, reading an entire file into an array and then processing it line by line using a parallel loop proved significantly more beneficial than reading a line, then processing that line. Unfortunately, I still see a lot of C# programmers and C# code running .Net 4 (or above) doing the age-old "read a line, process line, repeat until end of file" technique instead of "read all the lines into memory and then process". The performance difference is so great it even makes up for the loss of time when just reading a file.

This test code is just doing mathematical calculations, too. The difference in performance may be even greater if you need to do other things to process your data as well, such as running a database query.

Obviously, you should test on your own system before micro-optimizing this functionality.
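As a rough illustration of the two approaches being compared, the sketch below contrasts the T3-style "read a line, process a line" loop with the T9-style "File.ReadAllLines() into a string array, then Parallel.For" approach. It is not the article's test code: the class name `ReadAndProcessSketch`, the method names, and the `SumDigits` per-line work (adding up decimal digits) are hypothetical stand-ins for the character-by-character parsing and calculation described in the text.

```csharp
using System;
using System.IO;
using System.Threading;
using System.Threading.Tasks;

public static class ReadAndProcessSketch
{
    // Hypothetical per-line work (an assumption, not the original test code):
    // walk the line character by character and add up every decimal digit.
    public static long SumDigits(string line)
    {
        long sum = 0;
        foreach (char c in line)
        {
            if (c >= '0' && c <= '9')
            {
                sum += c - '0';
            }
        }
        return sum;
    }

    // T3-style: read a line, process it, repeat until end of file.
    public static long ProcessLineByLine(string path)
    {
        long total = 0;
        using (var reader = new StreamReader(path))
        {
            string line;
            while ((line = reader.ReadLine()) != null)
            {
                total += SumDigits(line);
            }
        }
        return total;
    }

    // T9-style: read every line into a string array, then process in parallel.
    public static long ProcessInParallel(string path)
    {
        string[] lines = File.ReadAllLines(path);
        long total = 0;

        // Each worker keeps its own subtotal and merges it into the shared
        // total once, which avoids locking on every line.
        Parallel.For(0, lines.Length,
            () => 0L,
            (i, state, subtotal) => subtotal + SumDigits(lines[i]),
            subtotal => Interlocked.Add(ref total, subtotal));

        return total;
    }
}
```

Both methods should return the same total for the same file, so timing each with a `System.Diagnostics.Stopwatch` on your own data is a reasonable way to check whether the parallel approach pays off on your system. Note that the parallel version holds the whole file in memory, which may not suit very large files.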
